Building a Systematic Trading System With AI (Post #3: Building the Backtesting Engine)

From Signals to Tradable Positions

This post is part of a series in which I’m documenting the process of building a functional trading system from scratch using agentic AI. Post #1 was about building the data pipeline (focused on futures for now). Post #2 covered the design of the infrastructure to handle trading rules (one trading signal for one instrument), trading subsystems (combinations of trading rules for one instrument) and trading strategies (combinations of subsystems for multiple instruments). This post is about the implementation of the backtesting engine. But before I get into that, a quick update.

Stuff Breaks

This series is happening in real time. At this stage, my systematic trading app/platform already “works”. I can:

Run a daily routine to update historical data

Create strategies with different trading rules and backtest them

Connect to my broker

Execute orders

Keep track of positions by strategy/instrument and automatically reconcile them with broker positions.

I have been paper trading a set of strategies on a group of futures for a few weeks. However, a few things have come up that forced me to make adjustments along the way:

Data update was still fragile. Depending on when during the day I ran the update routine, I was ending up with incomplete bars for the current trading session. The expected behavior was that, on the next update, the system would complete those bars, but this wasn’t working, so I had to make some changes. It’s much more robust now, and I include some automated checks to ensure everything is ok.

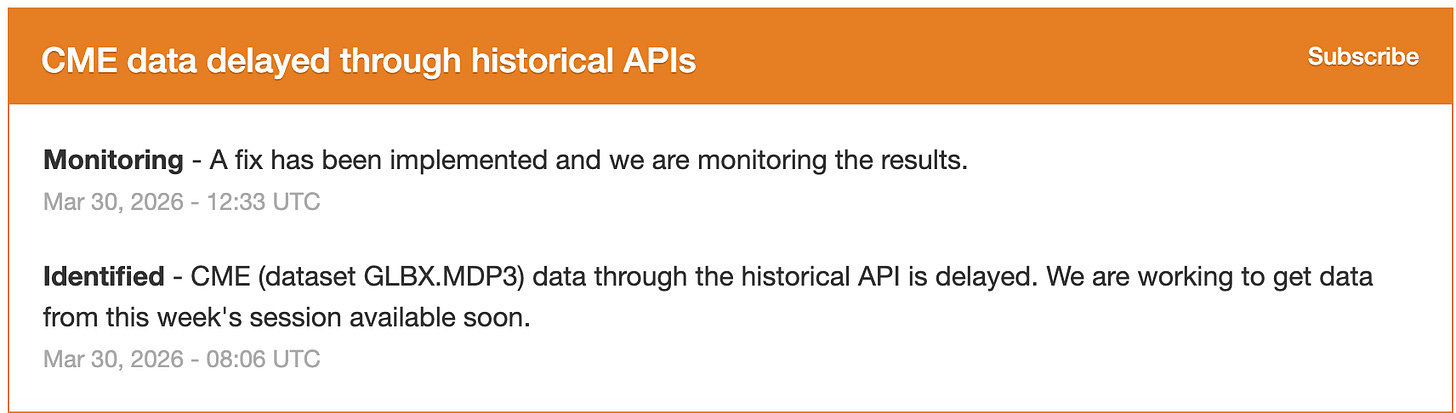

The data provider occasionally has its own issues, which made me think about redundancy. Given the goal of this project, though, I’ll keep it as is for now, as I’m aiming for zero cost.

In the process of dealing with the data issue, I decided at one point to do a clean reset of the DB to reingest all historical data. However, the DB also contained metadata on saved strategies, including the ones I was paper trading. This was, of course, my fault for not having considered it, but after this, I decided to save strategies in a separate DB to avoid this kind of issue. I also implemented some tools for backups.

The Backtesting Engine

At first glance, backtesting sounds simple. Given a price series and a trading rule, it is easy enough to write a few lines of code that generate positions and compute returns. But that kind of toy backtest is not a backtesting engine. An actual engine has to combine signals across rules and instruments, translate them into tradable contracts, apply volatility targeting and transaction costs, and do all this in a way that remains consistent with how the strategy would actually be traded. The danger of toy backtests is not just that they are simplistic, but that they can give you misleading confidence about whether the strategy can actually be traded.

What the Backtesting Engine Needs to Do

For this project, the backtesting engine has to solve a very specific problem. It must:

take continuous futures series as the inputs for signal generation

allow multiple trading rules per instrument

combine those rules into an instrument-level subsystem

combine multiple subsystems into a portfolio strategy

transform signals into futures contracts

apply volatility targeting

incorporate transaction costs

handle instruments with different data histories

The backtesting engine is not just producing an equity curve. It is acting as the layer that connects research signals to tradable positions, allowing realistic calculation of simulated P&L/returns. This is also slightly different from the usual backtesting approach in academic papers, which mostly works with returns rather than P&L, and with portfolio weights rather than position sizes.

In sum, the engine has to make decisions about hierarchy, position sizing, rebalancing, and contract-level implementation.

Signal Generation vs Position Sizing

A second important design choice was to separate signal generation from position sizing. A trading rule should tell us something about direction or conviction. It should not decide final leverage.

Some rules naturally produce discrete signals, such as:

$$S_i \in \{-1, 0, +1\}$$

Others naturally produce continuous signals bounded in some interval, such as:

$$S_i \in [-1, 1]$$

But regardless of whether a rule is binary or continuous, the rule output is only an input into the portfolio engine: it is not yet the final position.

For this project, the cleanest approach was:

trading rules produce signals,

subsystems combine signals,

the backtesting engine converts the resulting signal into futures positions.
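To make the separation concrete, here is a minimal sketch of the first two layers: one discrete rule, one continuous rule, and a subsystem that combines them. The rule definitions, function names, lookbacks, and the weighted-average combination are all illustrative assumptions, not the app's actual code. Note that nothing here knows about capital, leverage, or contracts.

```python
from typing import Callable, Sequence

# A rule maps a price history to a signal in [-1, 1] (hypothetical interface).
Rule = Callable[[Sequence[float]], float]


def clip(x: float, lo: float = -1.0, hi: float = 1.0) -> float:
    """Bound a value to the interval [lo, hi]."""
    return max(lo, min(hi, x))


def breakout_rule(prices: Sequence[float], lookback: int = 20) -> float:
    """Discrete rule: +1 above the prior lookback high, -1 below the prior low, else 0."""
    if len(prices) < 2:
        return 0.0
    window = prices[-lookback - 1:-1]  # prior bars, excluding the latest one
    last = prices[-1]
    if last > max(window):
        return 1.0
    if last < min(window):
        return -1.0
    return 0.0


def momentum_rule(prices: Sequence[float], lookback: int = 20, scale: float = 0.10) -> float:
    """Continuous rule: lookback return divided by an assumed scale, clipped to [-1, 1]."""
    past = prices[-lookback - 1] if len(prices) > lookback else prices[0]
    ret = prices[-1] / past - 1.0
    return clip(ret / scale)


def subsystem_signal(prices: Sequence[float], rules: Sequence[Rule],
                     weights: Sequence[float]) -> float:
    """Combine rule outputs into one instrument-level signal (weighted average)."""
    total = sum(weights)
    combined = sum(w * rule(prices) for rule, w in zip(rules, weights)) / total
    return clip(combined)
```

The output of `subsystem_signal` is still only a conviction score. Turning it into a number of contracts is left to the sizing layer.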

Futures

With ETFs or stocks, one can often get away with backtesting directly on adjusted price series. Futures are less forgiving. For futures strategies, at least three separate objects matter:

the continuous series used for signal generation,

the actual contract being traded at a given point in time,

and the contract specifications needed for P&L and risk sizing.

This forces the engine to keep several things separate that are often conflated in simpler systems. For now, the signals of the strategies I’m considering are computed on continuous series. But positions must ultimately be expressed in real contracts, each with its own price and multiplier.

That means a backtest engine for futures has to answer two distinct questions:

What is the signal on the synthetic continuous series?

What does that imply in terms of contracts in the currently tradable maturity?

For now, I’m relying on continuous series, which provide a very good approximation for strategies like trend following or mean reversion. In a future version, I plan to modify the engine to work directly with each instrument’s contract chain, which will also allow me to incorporate other types of strategies that require trading more than one contract for the same instrument, like carry.

Position Sizing and Volatility Targeting

The most important part of the engine is probably how it sizes positions. The engine currently supports two broad approaches:

per-subsystem volatility targeting,

portfolio-level volatility targeting with covariance scaling.

Per-Contract Notional and Dollar Volatility

For an instrument indexed by $i$, define the current futures price and contract multiplier as $P_i$ and $m_i$. The notional value of one contract is then $N_i = P_i m_i$. Let annualized return volatility be $\sigma_{i,\text{ann}}$. Then annualized dollar volatility per contract is:

$$\sigma^{\$}_i = N_i \, \sigma_{i,\text{ann}} = P_i \, m_i \, \sigma_{i,\text{ann}}$$

This converts percentage price risk into dollar risk per contract, which is the quantity the engine needs for sizing.
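As a rough illustration, the calculation can be sketched in a few lines. The function names are hypothetical, and the volatility estimator (sample standard deviation of daily returns scaled by the square root of 252) is an assumption for the example, not necessarily what the engine uses.

```python
import math
import statistics
from typing import Sequence

TRADING_DAYS = 252  # assumed annualization factor


def annualized_vol(daily_returns: Sequence[float]) -> float:
    """Annualize the sample standard deviation of daily returns."""
    return statistics.stdev(daily_returns) * math.sqrt(TRADING_DAYS)


def dollar_vol_per_contract(price: float, multiplier: float, ann_vol: float) -> float:
    """Annualized dollar volatility of one contract: notional times return vol."""
    notional = price * multiplier
    return notional * ann_vol
```

For example, with a price of 5,000, a multiplier of 50, and 16% annualized volatility (illustrative numbers), one contract has a notional of 250,000 and an annualized dollar volatility of about 40,000.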

Per-Subsystem Vol Targeting

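Under the simpler approach, each subsystem is sized independently. Suppose account equity is $A$, the target annualized volatility is $\sigma^*$, and the subsystem signal is $S_i \in [-1, 1]$. Then the float number of contracts is approximately:

$$n_i \approx \frac{S_i \, A \, \sigma^*}{P_i \, m_i \, \sigma_{i,\text{ann}}}$$

where the denominator is the annualized dollar volatility per contract.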

The backtest then rounds this to an integer contract count.

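This is simple and intuitive, but it ignores cross-asset correlations. When capital is split across imperfectly correlated subsystems, diversification means the portfolio as a whole will typically undershoot target volatility.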
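A minimal sketch of per-subsystem sizing, under the assumption that the float position is the signal times the capital at risk (capital times target volatility) divided by the dollar volatility of one contract. The function names and example inputs are hypothetical.

```python
def float_contracts(signal: float, capital: float, target_vol: float,
                    price: float, multiplier: float, ann_vol: float) -> float:
    """Unrounded contracts so that a full-strength signal carries the target dollar risk."""
    dollar_vol = price * multiplier * ann_vol  # annualized dollar vol of one contract
    return signal * capital * target_vol / dollar_vol


def rounded_contracts(contracts: float) -> int:
    """Round to a whole number of contracts as the last step."""
    return int(round(contracts))
```

With a signal of 0.75, capital of 1,000,000, a 20% target, and a contract whose dollar volatility is 40,000, the float position is 3.75 contracts, which rounds to 4. One detail to keep in mind: Python's built-in `round` rounds exact halves to the nearest even integer, so 2.5 becomes 2.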

Portfolio-Level Vol Targeting

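A more interesting case is portfolio-level volatility targeting. Here, we first build inverse-volatility base positions. Suppose each instrument also has a risk budget weight $w_i$. Then the base float contracts are:

$$n_i^{\text{base}} = \frac{S_i \, w_i \, A \, \sigma^*}{P_i \, m_i \, \sigma_{i,\text{ann}}}$$

This gives a risk-balanced composition (although it still ignores correlations). To translate this into portfolio weights, we use:

$$\omega_i = \frac{n_i^{\text{base}} \, P_i \, m_i}{A}$$

Let the annualized covariance matrix of returns be $\Sigma$. The portfolio volatility is then:

$$\sigma_p = \sqrt{\omega^\top \Sigma \, \omega}$$

To hit the target, the engine computes a global scaling factor:

$$k = \frac{\sigma^*}{\sigma_p}$$

and rescales the base contracts:

$$n_i = k \, n_i^{\text{base}}$$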

Only after this step are contracts rounded. This last point is important, because rounding too early produces systematic distortions, especially when capital is small relative to contract size or when many instruments are competing for limited risk budget.
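The sequence of steps (base positions, notional weights, portfolio volatility, global scaling, then rounding) can be sketched as follows. This is an illustration in plain Python under my own assumptions about the exact form of the base positions. The function names are hypothetical, and a real implementation would more likely use NumPy for the covariance algebra.

```python
import math
from typing import List, Sequence, Tuple


def base_contracts(signals: Sequence[float], risk_weights: Sequence[float],
                   notionals: Sequence[float], ann_vols: Sequence[float],
                   capital: float, target_vol: float) -> List[float]:
    """Inverse-volatility base positions: risk share divided by per-contract dollar vol."""
    return [s * w * capital * target_vol / (n * v)
            for s, w, n, v in zip(signals, risk_weights, notionals, ann_vols)]


def portfolio_vol(weights: Sequence[float], cov: Sequence[Sequence[float]]) -> float:
    """sqrt(w' Sigma w) for notional weights w and an annualized covariance matrix."""
    size = len(weights)
    variance = sum(weights[i] * cov[i][j] * weights[j]
                   for i in range(size) for j in range(size))
    return math.sqrt(max(variance, 0.0))


def scale_to_target(base: Sequence[float], notionals: Sequence[float],
                    cov: Sequence[Sequence[float]], capital: float,
                    target_vol: float) -> Tuple[List[float], float]:
    """Rescale base contracts by one global factor so portfolio vol hits the target."""
    weights = [n * notional / capital for n, notional in zip(base, notionals)]
    vol = portfolio_vol(weights, cov)
    k = target_vol / vol if vol > 0 else 0.0  # no exposure, nothing to scale
    return [k * n for n in base], k


def round_contracts(contracts: Sequence[float]) -> List[int]:
    """Rounding happens last, after the global scaling."""
    return [int(round(c)) for c in contracts]
```

As a check on the logic, take two uncorrelated instruments with 20% and 10% volatility, equal risk weights, full-strength long signals, notionals of 250,000 and 100,000, capital of 1,000,000, and a 20% target. The base positions are 2 and 10 contracts, the base portfolio volatility is about 14.1%, so the scaling factor is about 1.41 and the final rounded positions are 3 and 14 contracts.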

I’m also going to implement a full risk budget/parity option soon.

Rebalancing Frequency

The system currently handles strategies that compute signals daily. To make backtesting more flexible, the system can simulate trading at a lower frequency, such as weekly or monthly. This matters because the research frequency of a signal and the trading frequency of a strategy do not always need to coincide. It will also come in handy later for my other book, which has tactical allocation strategies with ETFs that rebalance monthly or weekly.
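One simple way to decouple the two frequencies is to keep computing daily target positions but only adopt a new target on the first trading day of each period, holding it in between. The sketch below assumes that convention. The function names and the choice of rebalance day are illustrative assumptions, not the app's actual logic.

```python
from datetime import date
from typing import List, Sequence, Tuple


def period_key(d: date, freq: str) -> Tuple[int, ...]:
    """Identify the rebalancing period a trading day belongs to."""
    if freq == "daily":
        return (d.year, d.month, d.day)
    if freq == "weekly":
        iso = d.isocalendar()
        return (iso[0], iso[1])  # ISO year and ISO week number
    if freq == "monthly":
        return (d.year, d.month)
    raise ValueError(f"unsupported frequency: {freq}")


def held_positions(dates: Sequence[date], daily_targets: Sequence[int],
                   freq: str) -> List[int]:
    """Adopt the daily target on the first trading day of each period, then hold it."""
    held: List[int] = []
    current = 0
    last_key = None
    for d, target in zip(dates, daily_targets):
        key = period_key(d, freq)
        if key != last_key:  # first trading day of a new period
            current = target
            last_key = key
        held.append(current)
    return held
```

With daily targets of 1 through 6 over the six trading days from Monday 2024-01-01 to Monday 2024-01-08, weekly rebalancing holds 1 contract for the first week and switches to 6 on the second Monday.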

Transaction Costs

Any realistic futures backtest needs to model trading costs in contract space.

The app currently incorporates two simple but useful cost components:

commission per contract,

slippage in ticks.

These costs are applied whenever the position changes, not only when the sign of the signal flips. That matters because a volatility-targeted system may resize positions even when its directional view remains unchanged.

Commission cost is proportional to the absolute contract change $|\Delta n_i|$, whereas slippage cost is $|\Delta n_i|$ times the assumed slippage in ticks times the tick value. This is still a fairly simple model, and I still need to incorporate rolling costs.
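A minimal sketch of this cost model, charging both components on every change in contract count. The function names and the commission and slippage values in the example are placeholders, not the app's actual assumptions.

```python
from typing import Sequence


def trade_cost(prev_contracts: int, new_contracts: int,
               commission_per_contract: float,
               slippage_ticks: float, tick_value: float) -> float:
    """Cost of moving from one position to another, in account currency."""
    traded = abs(new_contracts - prev_contracts)  # |delta n|
    commission = traded * commission_per_contract
    slippage = traded * slippage_ticks * tick_value
    return commission + slippage


def total_costs(positions: Sequence[int], commission_per_contract: float,
                slippage_ticks: float, tick_value: float) -> float:
    """Sum costs over a position path, starting from a flat position."""
    total = 0.0
    prev = 0
    for pos in positions:
        total += trade_cost(prev, pos, commission_per_contract,
                            slippage_ticks, tick_value)
        prev = pos
    return total
```

For the position path 0, 3, 3, 5, -2, the engine trades 12 contracts in total even though the sign of the position only flips once. At 2.00 commission per contract and one tick of slippage worth 12.50, that comes to 174.00 in costs.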

Trading Micro Futures

For some futures contracts, different versions exist, typically with different multipliers. The larger contracts usually have longer histories and better quality data. For example, micro Bitcoin futures (MBT) started trading later than the larger BTC contract, and the same is true of MES relative to ES. I added functionality that allows me to use the larger contracts for signal generation and simulate trading with the micro by adjusting the contract multipliers and customizing cost assumptions.
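To illustrate the idea, the sketch below keeps a separate specification for the contract being traded and sizes against it, while the signal and price would come from the larger contract's series. The `ContractSpec` class and function name are hypothetical. The ES and MES multipliers and tick values are the exchange's published ones, while the commission figures are placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContractSpec:
    symbol: str
    multiplier: float   # currency value of one index point
    tick_value: float   # currency value of one minimum price move
    commission: float   # per-contract commission (placeholder values below)


ES = ContractSpec("ES", multiplier=50.0, tick_value=12.50, commission=2.00)
MES = ContractSpec("MES", multiplier=5.0, tick_value=1.25, commission=0.50)


def contracts_for(signal: float, capital: float, target_vol: float,
                  price: float, ann_vol: float, spec: ContractSpec) -> int:
    """Size with the traded contract's multiplier; the signal comes from elsewhere."""
    dollar_vol = price * spec.multiplier * ann_vol
    return int(round(signal * capital * target_vol / dollar_vol))
```

With 100,000 of capital, a 20% target, a signal of 0.75, a price of 5,000, and 16% volatility, sizing against ES gives 0.375 contracts, which rounds to zero, while sizing against MES gives 3.75, which rounds to 4. This is the main practical reason micros matter for a small account.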

What Broke While Building It

This part is worth emphasizing because it says something about both the engineering challenge and the use of AI for development. A backtesting engine sounds easy until one starts dealing with the boundary between signal generation and actual trading logic. Some of the issues I ran into included:

Bugs in the contract roll logic for continuous series, which I only discovered because the backtested P&L looked wrong

Positions being rounded too early, which caused issues in some edge cases

Inconsistencies between signal contracts and execution contracts

These were not cosmetic bugs: they reflected places where the internal logic of the system was not yet fully coherent. This was also one of the places where working with an AI agent was most revealing. The agent was very good at producing working code quickly, but consistency across the entire pipeline had to be enforced through repeated testing, inspection, and revision.

What the Engine Still Does Not Do Perfectly

At this stage, the backtesting engine is good enough to support serious experimentation, which is all I need for now. It is not “finished,” and probably never will be in any absolute sense. But it is now coherent enough to connect research signals to tradable positions without relying on the kinds of shortcuts that make toy backtests misleading.