Building a Systematic Trading System With AI (Post #4: Building the Trading Layer)

From Signals to Orders and Final Thoughts on the Project

This is the fourth and last post of a series in which I’m documenting the process of building a functional systematic trading system from scratch using an AI agent. Previous parts are here:

Once the data pipeline and backtesting engine were in place, the next challenge was operational. At that point, the app could already process historical futures data for signal generation and backtest strategies under fairly realistic assumptions. But neither of those things is enough to trade a strategy in practice. That requires a different layer of the system.

The trading problem can be stated very simply:

How do we take a signal generated by a model and turn it into an actual position held at the broker, while keeping the process observable, reviewable, and safe?

This quickly expands into a chain of distinct steps. Strategies produce signals. Given an account size and choices about how to combine them to achieve some target risk, the signals need to be turned into target positions. The target positions then need to be compared with what is currently held in the account. That comparison produces one or more orders that, once executed, change the state of the portfolio, which in turn determines future P&L.

In other words, the trading layer is really about managing the transformation

Signals → Target Positions → Orders → Actual Positions → P&L

in a way that maintains internal consistency.

From Signals to Targets

The first step is to decide what the strategy wants to hold based on a signal. In the app, signals are generated by trading rules, then combined inside subsystems, and finally combined into a strategy and translated into desired positions by the portfolio engine. The output of this stage is not an order but a target: the number of contracts the strategy would like to hold for each instrument.

Conceptually, if the strategy signal for instrument $i$ is $S_i$ and the sizing engine determines that one unit of signal corresponds to some risk-scaled contract quantity, then the target $n_i^*$ can be written schematically as

$$n_i^* = f(S_i)$$

The exact form of the function depends on the sizing logic. While the distinction between signal and position may seem obvious, it’s important in order to avoid confusion between the two layers.
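As an illustration only, here is a minimal sketch of what such a sizing function could look like, assuming simple volatility targeting. The post does not show the app's sizing logic, so the formula, the `SizingInputs` fields, and the `target_contracts` name are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SizingInputs:
    """Hypothetical inputs to the sizing step (not the app's actual schema)."""
    capital: float         # capital allocated to the strategy
    target_vol: float      # annualized volatility target, e.g. 0.20
    price: float           # current futures price
    multiplier: float      # contract multiplier (currency per price point)
    instrument_vol: float  # annualized return volatility, e.g. 0.16
    weight: float = 1.0    # instrument weight inside the strategy


def target_contracts(signal: float, s: SizingInputs) -> int:
    """Map a signal (assumed to lie in [-1, 1]) to a whole number of contracts."""
    # Currency risk carried by one contract over a year.
    risk_per_contract = s.price * s.multiplier * s.instrument_vol
    if risk_per_contract <= 0:
        raise ValueError("price, multiplier and volatility must be positive")
    # Contracts held when the signal is at full strength (+1).
    full_size = s.capital * s.target_vol * s.weight / risk_per_contract
    return round(signal * full_size)


inputs = SizingInputs(capital=1_000_000, target_vol=0.20,
                      price=5000.0, multiplier=50.0, instrument_vol=0.16)
print(target_contracts(0.6, inputs))   # 3 contracts long
print(target_contracts(-1.0, inputs))  # 5 contracts short
```

The point of the sketch is the separation of concerns: the signal carries direction and conviction, while everything about capital and risk lives in the sizing inputs.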

The approach I adopted in this project is typical of many systematic trading setups. We take a snapshot of the current market state and append it, in effect, as the latest observation for the purpose of computing the current signal, which is then turned into a target position as described above.

Once the app computes targets, it has to decide what to do with them. The current setup is semi-automatic: the app treats targets as snapshots. A target run is computed, saved, and then reviewed. Execution then operates on that persisted snapshot rather than silently recomputing the strategy at order time. This keeps the trading decision being executed explicit and auditable. Obviously, if we were to move into higher-frequency strategies, automation and speed would be much more important.
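The compute, save, review, execute flow can be sketched roughly as follows. This is a hypothetical illustration using JSON files; the app's real storage is a database, and the `TargetSnapshot` fields, the status values, and the function names are assumptions for the example.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from pathlib import Path


@dataclass(frozen=True)
class TargetSnapshot:
    """One persisted target run (hypothetical schema)."""
    run_id: str
    strategy: str
    targets: dict[str, int]  # instrument -> target contracts
    created_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat())
    status: str = "pending_review"


def save_snapshot(snapshot: TargetSnapshot, directory: Path) -> Path:
    """Write the snapshot to disk so it can be reviewed before execution."""
    directory.mkdir(parents=True, exist_ok=True)
    path = directory / f"{snapshot.run_id}.json"
    path.write_text(json.dumps(asdict(snapshot), indent=2))
    return path


def approve(path: Path) -> None:
    """Mark a saved snapshot as reviewed and approved."""
    data = json.loads(path.read_text())
    data["status"] = "approved"
    path.write_text(json.dumps(data, indent=2))


def load_approved_targets(path: Path) -> dict[str, int]:
    """Return targets for execution, refusing snapshots that were not approved."""
    data = json.loads(path.read_text())
    if data["status"] != "approved":
        raise PermissionError(f"snapshot {data['run_id']} has not been approved")
    return data["targets"]
```

Because execution only ever reads what `load_approved_targets` returns, the numbers being traded are exactly the numbers that were reviewed, even if market data has changed in the meantime.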

From Targets to Orders

Once a target is known, the next step is to compare the target with the current position. If the desired position is $n_i^*$ and the current position attributed to the strategy is $n_i^B$, then the order quantity is

$$\Delta n_i = n_i^* - n_i^B$$

Again, this is conceptually simple, but there are some important details. The app is not generating trades directly from signals, but from the difference between desired and current state. This also means that the app needs to be able to read broker snapshots and reconcile positions with one or more strategies. Without a reliable view not only of current broker holdings, but of what each strategy is holding, the app cannot compute meaningful order deltas.
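In code, the delta calculation amounts to a dictionary difference. The sketch below is a minimal illustration with a hypothetical `order_deltas` function; it assumes that an instrument held by the strategy but missing from the targets should be closed, which is a design choice rather than something stated in the post.

```python
def order_deltas(targets: dict[str, int], held: dict[str, int]) -> dict[str, int]:
    """Return signed order quantities: positive means buy, negative means sell.

    `targets` are the desired contracts per instrument and `held` are the
    contracts currently attributed to the strategy. Instruments that need
    no trade are left out of the result.
    """
    deltas: dict[str, int] = {}
    for symbol in sorted(set(targets) | set(held)):
        delta = targets.get(symbol, 0) - held.get(symbol, 0)
        if delta != 0:
            deltas[symbol] = delta
    return deltas


targets = {"ES": 3, "ZN": -2}
held = {"ES": 1, "ZN": -2, "CL": 1}
print(order_deltas(targets, held))  # {'CL': -1, 'ES': 2}
```

Note that `held` must be the strategy-level position, not the raw account position, or the deltas will absorb holdings that belong to other strategies.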

From Orders to Positions

Making the app connect to my broker’s API was extremely easy (despite a few glitches related to a well-known issue with the Interactive Brokers API running inside a Streamlit environment). But the trading layer is more than a wrapper around the broker API. Once orders are submitted, the app has to update its view of what the account actually holds. It then has to compare that view with what the broker reports and decide whether the internal state and the external state are still aligned.

This is especially important because the broker only knows account-level positions, i.e., it does not know about the internal hierarchy of rules, subsystems, and strategies that exists inside the app. So if several strategies are meant to coexist in the same account, the app has to maintain its own strategy-level accounting: it must retrieve broker positions and attribute them internally to one or more strategies. Without this internal attribution layer, a multi-strategy system running in one account becomes impossible to monitor coherently.
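One way to picture strategy-level accounting is a small ledger keyed by strategy and instrument, updated on every fill. This is a hypothetical sketch, not the app's implementation; the `StrategyLedger` class and its method names are invented for the example.

```python
from collections import defaultdict


class StrategyLedger:
    """Tracks positions per (strategy, instrument), updated from fills."""

    def __init__(self) -> None:
        self._positions: dict[tuple[str, str], int] = defaultdict(int)

    def apply_fill(self, strategy: str, symbol: str, signed_qty: int) -> None:
        """Record an executed quantity: positive for buys, negative for sells."""
        self._positions[(strategy, symbol)] += signed_qty

    def position(self, strategy: str, symbol: str) -> int:
        """Contracts of `symbol` currently attributed to `strategy`."""
        return self._positions.get((strategy, symbol), 0)

    def account_view(self) -> dict[str, int]:
        """Net position per instrument across all strategies."""
        net: dict[str, int] = defaultdict(int)
        for (_, symbol), qty in self._positions.items():
            net[symbol] += qty
        return {s: q for s, q in net.items() if q != 0}


ledger = StrategyLedger()
ledger.apply_fill("trend", "ES", 3)
ledger.apply_fill("carry", "ES", -1)
print(ledger.position("trend", "ES"))  # 3
print(ledger.account_view())           # {'ES': 2}
```

The broker would report only the net `ES` position of 2 here; the split between the two strategies exists nowhere except in the ledger.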

From Positions to P&L

Given existing positions, we would like to measure P&L to monitor the system. In a futures system, this means marking positions to market using current prices and contract multipliers. The calculation is relatively straightforward, but the system needs to maintain a coherent chain from executed positions to current account state to P&L. Without that, the system cannot really be monitored.
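For a position held over a period, the mark-to-market calculation is quantity times price change times multiplier. The sketch below is a simplified illustration: it ignores fees, intraperiod fills, and currency conversion, and the function name and example numbers are assumptions.

```python
def mark_to_market_pnl(
    positions: dict[str, int],
    prev_prices: dict[str, float],
    prices: dict[str, float],
    multipliers: dict[str, float],
) -> dict[str, float]:
    """P&L per instrument for positions held from `prev_prices` to `prices`."""
    pnl: dict[str, float] = {}
    for symbol, qty in positions.items():
        price_change = prices[symbol] - prev_prices[symbol]
        pnl[symbol] = qty * price_change * multipliers[symbol]
    return pnl


pnl = mark_to_market_pnl(
    positions={"ES": 2, "ZN": -3},
    prev_prices={"ES": 5000.0, "ZN": 110.0},
    prices={"ES": 5010.0, "ZN": 110.5},
    multipliers={"ES": 50.0, "ZN": 1000.0},  # illustrative multipliers
)
print(pnl)                # {'ES': 1000.0, 'ZN': -1500.0}
print(sum(pnl.values()))  # -500.0
```

Feeding this function strategy-level positions rather than account-level ones gives P&L per strategy, which is what monitoring a multi-strategy account requires.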

The broad logic is simple enough, but the difficulty is in all the boundary conditions around it. Signals are generated on continuous futures series, but orders must be placed in actual tradable contracts. This means the app has to distinguish between the instrument used for signal generation and the instrument used for execution. I’ve also implemented a feature that allows the user to change the mapping of the contracts used for signal generation and trading. For example, I may use BTC for signal generation but execute on MBT.
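A mapping like the BTC to MBT example can be as simple as a lookup table with a fallback. The sketch below is hypothetical: the `EXECUTION_MAP` table and function names are invented, and it assumes targets have already been sized in units of the traded contract.

```python
# Signal instrument -> instrument actually traded (hypothetical, user-editable).
EXECUTION_MAP: dict[str, str] = {"BTC": "MBT"}


def execution_symbol(signal_symbol: str,
                     mapping: dict[str, str] = EXECUTION_MAP) -> str:
    """Return the traded instrument, defaulting to the signal instrument."""
    return mapping.get(signal_symbol, signal_symbol)


def remap_targets(targets: dict[str, int],
                  mapping: dict[str, str] = EXECUTION_MAP) -> dict[str, int]:
    """Re-key targets by traded instrument, summing any that collide."""
    remapped: dict[str, int] = {}
    for symbol, qty in targets.items():
        traded = execution_symbol(symbol, mapping)
        remapped[traded] = remapped.get(traded, 0) + qty
    return remapped


print(remap_targets({"BTC": 2, "ES": 1}))  # {'MBT': 2, 'ES': 1}
```

Since the two contracts differ in size, the sizing step would presumably need to use the traded contract's multiplier rather than the signal contract's.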

One important detail was to make sure that the app would not break in case of unattributed positions. Since I have other positions in my brokerage account that have nothing to do with the system, I had the agent implement logic to put these aside in an “unattributed” bucket, which allows me to quickly check anything that is not internally attributed to a strategy.
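The "unattributed" bucket can be thought of as the residual between what the broker reports and what the strategies claim. Here is a minimal sketch under that assumption; the function name is hypothetical and the symbols are just examples.

```python
def unattributed_positions(
    broker: dict[str, int], attributed: dict[str, int]
) -> dict[str, int]:
    """Return the part of each broker position that no strategy claims.

    `broker` is the account-level position per instrument and `attributed`
    is the net position per instrument summed across all strategies.
    """
    residual: dict[str, int] = {}
    for symbol in sorted(set(broker) | set(attributed)):
        diff = broker.get(symbol, 0) - attributed.get(symbol, 0)
        if diff != 0:
            residual[symbol] = diff
    return residual


broker = {"MES": 2, "AAPL": 100}
attributed = {"MES": 2}
print(unattributed_positions(broker, attributed))  # {'AAPL': 100}
```

A nonzero residual in an instrument that the system does trade is worth a closer look, since it may mean a fill was missed rather than that the position is unrelated.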

That is what makes the trading layer an engineering problem rather than just an API integration (which the AI agent did very efficiently).

Monitoring Exceptions

Real life is messy, and it is not possible to plan for every eventuality. However, we can and should carefully monitor what happens along the way, and the app should flag any situations that seem anomalous. My app ended up with separate monitoring and exception views to keep track of whether the system is currently in a trustworthy state.
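To give a flavor of what such checks might look like, here is a hypothetical sketch with two of them: a position mismatch against the broker and stale price data. The post does not list the app's actual checks, so the `Flag` type, the check names, and the 24-hour threshold are all assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Flag:
    """One anomaly raised by a monitoring check."""
    check: str
    detail: str


def run_checks(
    expected: dict[str, int],
    broker: dict[str, int],
    last_price_time: datetime,
    now: datetime,
    max_price_age: timedelta = timedelta(hours=24),
) -> list[Flag]:
    """Return a list of flags; an empty list means nothing looked anomalous."""
    flags: list[Flag] = []
    # Check 1: every position the system expects should match the broker.
    for symbol, qty in sorted(expected.items()):
        actual = broker.get(symbol, 0)
        if actual != qty:
            flags.append(Flag("position_mismatch",
                              f"{symbol}: expected {qty}, broker reports {actual}"))
    # Check 2: prices used for signals and P&L should be recent.
    if now - last_price_time > max_price_age:
        flags.append(Flag("stale_prices",
                          f"last price update at {last_price_time.isoformat()}"))
    return flags


now = datetime(2024, 1, 2, 12, tzinfo=timezone.utc)
for flag in run_checks({"ES": 2}, {"ES": 1}, now - timedelta(hours=30), now):
    print(flag.check, "-", flag.detail)
```

Returning flags as data rather than raising exceptions lets a monitoring view list every problem at once instead of stopping at the first.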

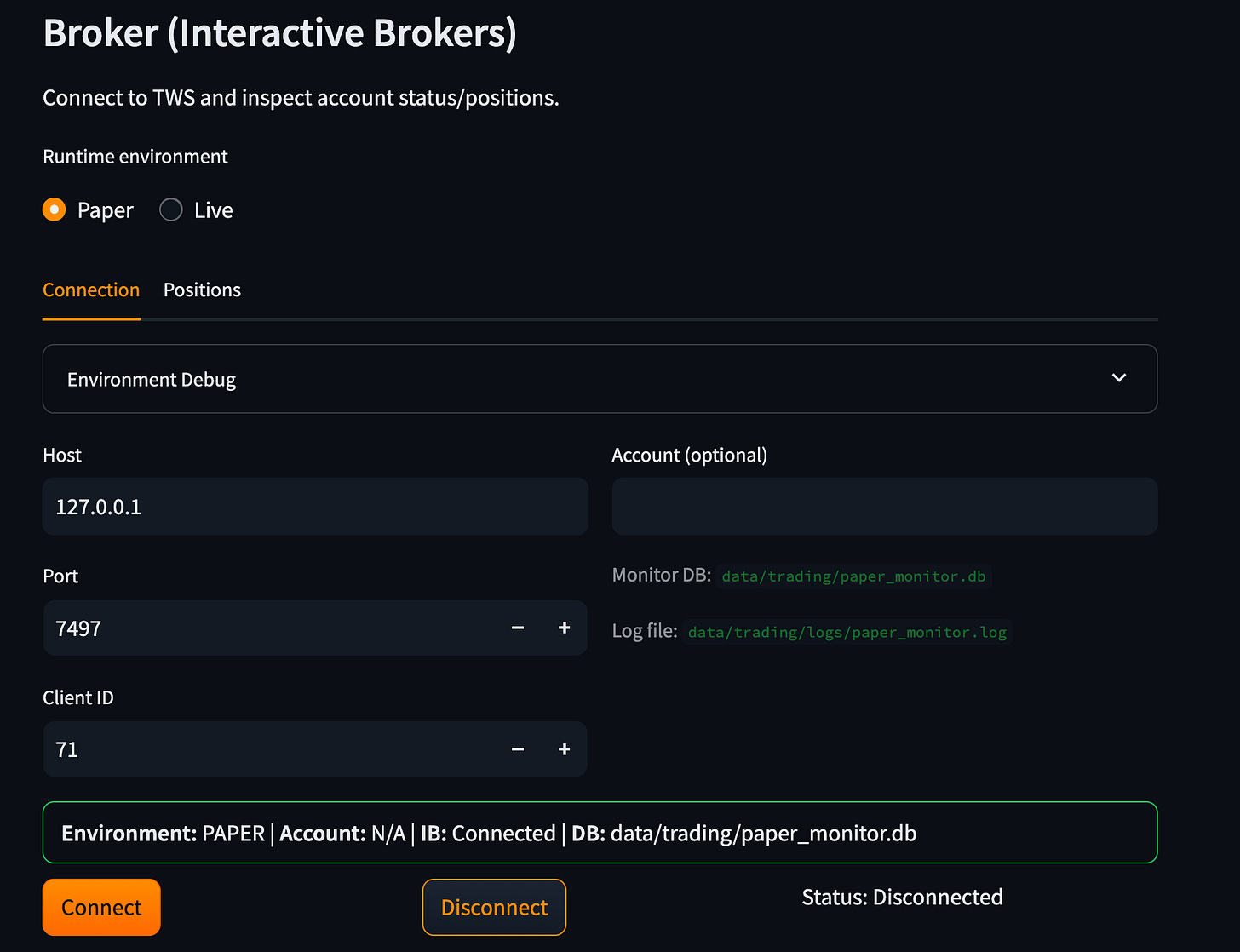

I did (and do) intend to use this app for real trading. For this reason, paper trading is essential, as it reveals many of the operational issues that can happen. To avoid any risk of mixing paper and live trading, I implemented a strict segregation between the two: each has its separate state, separate storage, separate logs, and separate broker connections.
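One simple way to enforce this kind of segregation is to derive every path and connection setting from a single environment name. The sketch below is hypothetical: the directory layout and names are invented, and the ports are the usual Trader Workstation defaults for paper and live sessions, which would need to match the local broker setup.

```python
from dataclasses import dataclass
from pathlib import Path


@dataclass(frozen=True)
class TradingEnvironment:
    """All environment-specific settings in one immutable object."""
    name: str
    db_path: Path
    log_path: Path
    broker_port: int


# Assumed defaults; adjust to whatever the broker gateway is configured to use.
_BROKER_PORTS = {"paper": 7497, "live": 7496}


def make_environment(name: str, root: Path = Path("data")) -> TradingEnvironment:
    """Build the settings for 'paper' or 'live'; anything else is rejected."""
    if name not in _BROKER_PORTS:
        raise ValueError(f"unknown environment: {name!r}")
    base = root / name
    return TradingEnvironment(
        name=name,
        db_path=base / "state.db",
        log_path=base / "app.log",
        broker_port=_BROKER_PORTS[name],
    )


paper = make_environment("paper")
live = make_environment("live")
print(paper.db_path, paper.broker_port)
print(live.db_path, live.broker_port)
```

Because the object is frozen and nothing else in the code builds paths or ports by hand, a paper session has no way to write into live state by accident.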

This part of the project also made the limitations of AI-assisted coding very visible. The difficulty was not in coding, but in making sure the entire chain was logically coherent, due to the many details involved in even a modestly complex trading system. Testing strategies in paper trading revealed several issues and allowed me to fix them safely.

What Still Needs Work

So this is the end of the series, but not really the end of the project. At this stage, I’ve been paper trading strategies, but I still don’t trust the system enough to put real money on the line. Some of the items on my to-do list are:

The system still runs locally on my laptop, with a local database. That is fine for development and paper trading, but too fragile for serious live use. A more robust deployment and storage architecture is still needed.

Incorporate a complete workflow for ETF strategies so I can automate the tactical allocation strategy that I use.

Improve the backtesting engine to use contract/expiry-level data for futures. Although the continuous futures series are good enough to backtest trend-following and mean-reversion strategies, using the actual expiries is more realistic and will allow me to model rollover assumptions more accurately, as well as test strategies like carry, which require trading in more than one contract.

Maintenance tools: if something goes wrong (say, I decide to manually close a position), there should be a way to provide that information to the system. Of course, I can tell the agent to fix it, but the app should be able to do this independently.

Improve the overall robustness of the system, including better P&L and strategy monitoring.

Further automation of data update and signal generation.

What This Project Taught Me

I had very little experience working with AI agents when I started this project. In the process of building the app, I learned enough about how to scope, guide, and iterate with them that part of me is tempted to start over and rebuild it from scratch with that knowledge in hand. I’ve been experimenting with agents on other projects as well, and both the speed and the quality of what I can produce keep improving.

One lesson in particular has become very clear to me: it pays to spend more time upfront defining the context, clarifying the scope, and working with the agent to produce a coherent plan before any coding begins. I’ve found it especially useful to instruct the agent to keep asking questions until it has clarity on all relevant points.

Over the course of building this app, the AI agent was extremely useful. It could write large amounts of code quickly, scaffold interfaces, refactor workflows, build database layers, and implement features at a pace I could never have matched on my own. While I’m quite comfortable with trading strategies, backtests, and portfolio construction, my knowledge of database architecture and UI development is rudimentary at best. In that sense, AI significantly expanded what I could realistically build.

This project also reinforced for me that AI is not a substitute for domain knowledge. It can accelerate development dramatically, but it is most useful when we can provide detailed specifications of how things should work and still detect problems even when, superficially, things look correct. There were very few cases where the code itself was the main problem. Most bugs arose because I had not provided enough detail about the desired behavior, or because the problem being tackled turned out to be more complex than it initially appeared. In other cases, the code would run and the results would seem reasonable, yet still be conceptually wrong in ways that were not obvious unless I inspected the logic directly or questioned the agent. These are the kinds of mistakes that can get lost in “vibe coding.” In that respect, developers have a real advantage when using these tools, because they are used to thinking in terms of specifications, tests, and edge cases.

I began this series with a simple question:

Can an AI agent build a functional systematic trading system from scratch?

My answer is yes, but with some caveats. AI can greatly accelerate the process, but the quality of the result still depends heavily on the human component. I was able to build a prototype that is close to functional for my own purposes, although I would still want much more testing and validation before trusting it with real money. Even so, it remains far from anything I have used, or would consider using, in a professional setting. Now, I’m not a professional developer, and I’m well aware of my limitations in that regard. I have no doubt that a professional developer using AI agents could build something orders of magnitude better.

So that, at least, is what this project taught me. AI made it possible to build much more, much faster, than I could have on my own. AI agents have effectively removed the speed of code creation as a limiting factor, but in a project like this, coherence and a vision of how the parts fit together are what matter. This still depends heavily on the human(s) in the loop.